Kinect didn’t die; it just changed form. Today at its annual Build developers conference, Microsoft announced Project Kinect for Azure, saying the sensor array will have all the capabilities we’re familiar with, but in a smaller, more power-efficient package. That means time-of-flight depth sensors, IR sensors and more, now paired with Azure AI. “Building on Kinect’s legacy that has lived on through HoloLens, Project Kinect for Azure empowers new scenarios for developers working with ambient intelligence,” Microsoft said.

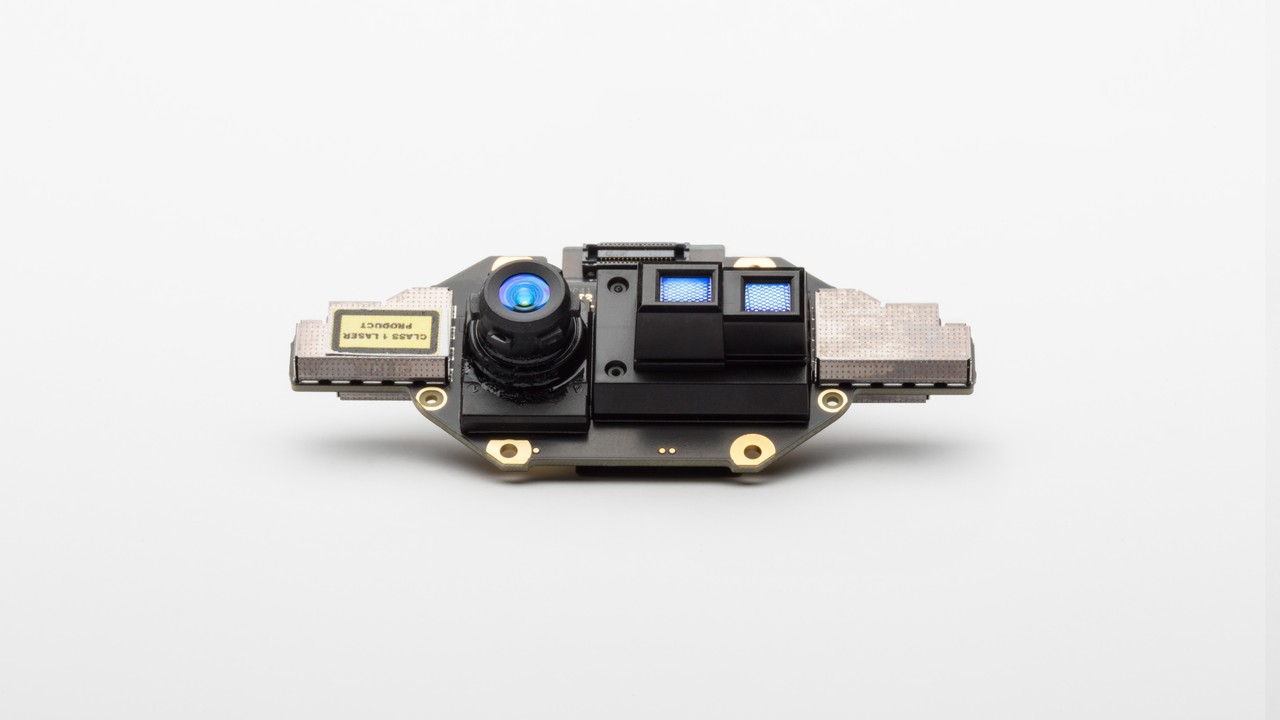

As you can tell from the image above, the new Kinect is much smaller than the one that came before it. The depth sensor resolution got a bump to 1024×1024, up from 512×424 on the sensor that shipped with Xbox One. The new Kinect should also work better in full sunlight, which could let it be used in even more places.

All told, it’s the fourth iteration of the device, and each time Microsoft has retooled it, the application has changed. Before this, the tech was built into HoloLens. In 2018, it’s being used for AI and smart sensors that plug into IoT Edge and machine learning services. We’ve seen what artists and game developers can do with Kinect; now it looks like we’ll find out what happens when it’s used for AI.

From the stage, CEO Satya Nadella said the aim for the newest Kinect is to have the “best spatial understanding, skeletal tracking, object recognition and package some of the most powerful sensors together with the least amount of depth noise.” He expects it to have myriad applications in consumer-facing and infrastructure settings.

In our Build preview, we said to expect more about Azure during today’s event, and, well, here’s the first evidence that Microsoft is using everything at its disposal to build out its cloud ecosystem, including the sensor that gamers and Redmond alike had abandoned.

Source: engadget.com